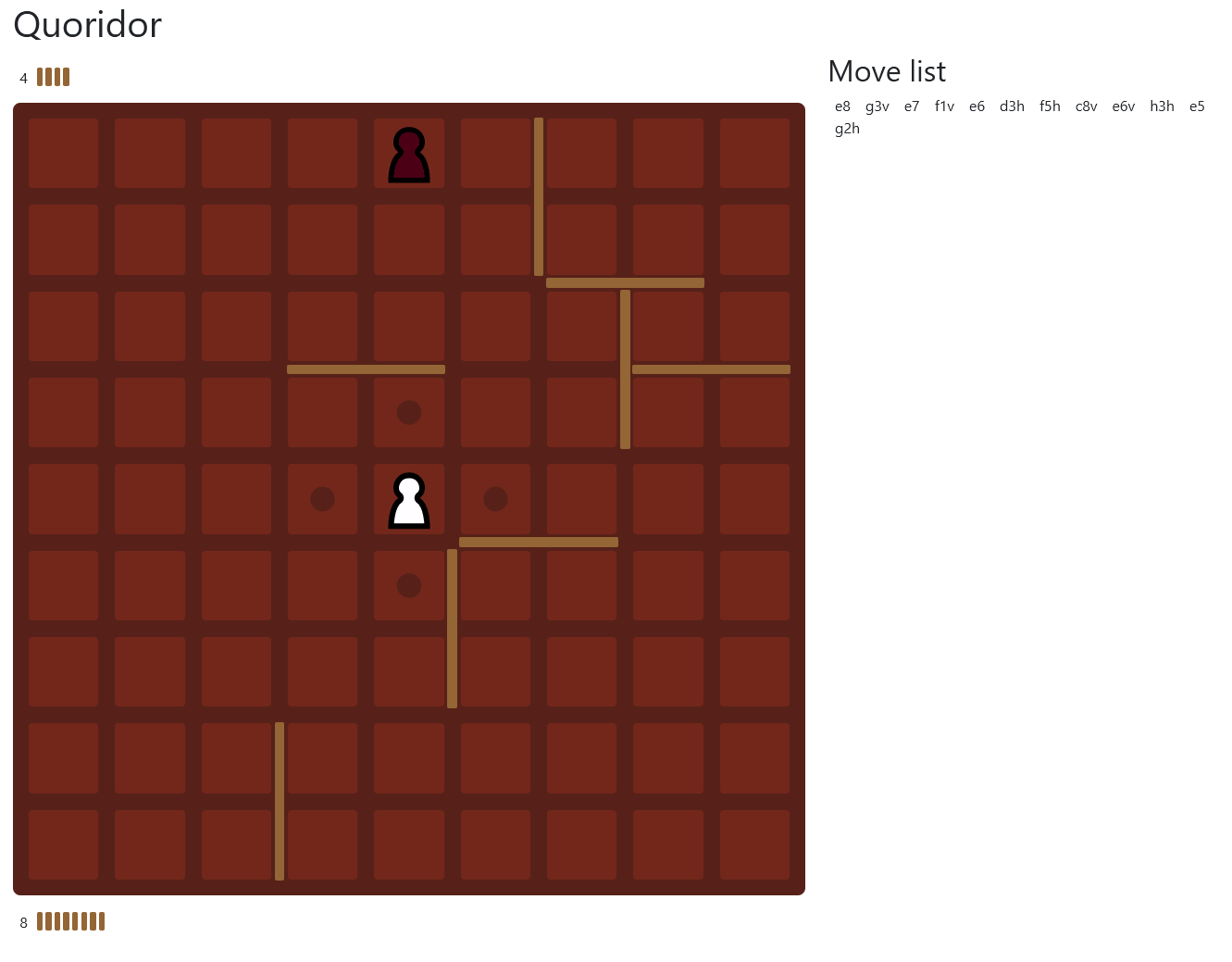

Quoridor

Quoridor is an abstract game where both players compete to move their token to the opposite side of the board first. Each turn, players may either move or place a wall so long as it does not completely cut off their opponent’s path to the end.

This project is an implementation of a UI and an attempt at an AI for playing the game built on Vue and OpenSpiel. All the game logic is controlled by OpenSpeil’s implementation of the game.

Architecture

The backend and frontend communicate over an API with the following methods: initial-state, next-state (plays a move), and best-move (performs AI inference). The state given back to the UI includes a FEN, move history, player locations, and number of walls for the UI to render. Since OpenSpiel game states cannot be serialized directly, the history is used to recreate the game state on each API call.

The UI supports both desktop and mobile and can either be played with one or two human players, although the demo only allows one human player and a very weak AI.

AI

Rather than using traditional methods for game AI, this project uses alpha-beta search with AlphaZero as an evaluation function. Training this proved to be slow since I was unable to get my GPU to play nicely with OpenSpeil running in docker.

Initially, the models would place walls randomly, likely because most available moves are wall placements. After some time, I was able to produce a model which understood that their token needed to walk forward in order to win, and that this was more important than placing walls. However, this model would place no walls at all and enter a loop during self-play where both tokens would shuffle back and forth.

I would like to revisit this project with a few improvements:

- Use GPU training

- Handle the shuffling case by either declaring a draw or a loss after N moves with no wall placements

- Use hand-coded evaluation logic for endgames in order to significantly reduce the depth needed to search

- Construct a heuristic to better direct search. Choosing one which is admissable is difficult, obvious heuristics like “distance to goal” are not because a wall can be placed by the opponent and increase the heuristic’s value.

Links

- Play Quoridor (cold starts are very bad, refresh after a bit)

- Source